The AI Hype Machine Keeps On A-Hummin'

If OpenClaw were a human assistant, I'd have to fire it for incompetence

If you listen to tech podcasts, you’ve probably heard about OpenClaw by now. OpenClaw is a new open-source AI agent. Supposedly, you just install the software, tell it what you want in plain English, and the claw gets to work, toiling for days on end if necessary to finish whatever task you’ve given it. The hype about OpenClaw probably reached its zenith on All-In, perhaps the most popular technology podcast right now. For the past few months, one of the hosts, Jason Calacanis, has been gushing about how OpenClaw can simply replace human assistants. If you follow AI accounts on X, you often see people claiming that they are building billion-dollar businesses with themselves at the helm and a fleet of OpenClaw AI assistants doing all the work while the human CEO sleeps.

Having developed a few AI agents myself, I was skeptical that OpenClaw could really do all that. In my post Stop Worshipping the False God of AGI, I explained the real-world problems and gotchas you have to resolve to create an AI agent that can do something relatively simple, such as download, summarize, and classify regulatory comments from the government site regulations.gov. A job like that can easily take a human associate in a law firm a week to accomplish, and it needs to be done again and again as new regulations are proposed. My AI agent can do it in under an hour. You can build AI agents to do non-trivial work that would take a human weeks of effort, but my experience has been that when you code the agent, you have to solve the particular problems it will encounter. Could OpenClaw really bypass all of that customization and just work out of the box?

I decided to install OpenClaw soon after it came out a couple of months ago. Because OpenClaw is an open-source project with over 400,000 lines of code, it’s a security risk to install it on your own machine. You can’t be sure what it might decide to do. So, I installed it on an external cloud server, an inexpensive DigitalOcean droplet, so that if it did get up to mischief, the damage would be contained. Installing OpenClaw on a Linux cloud machine is pretty easy; it took just a few minutes.

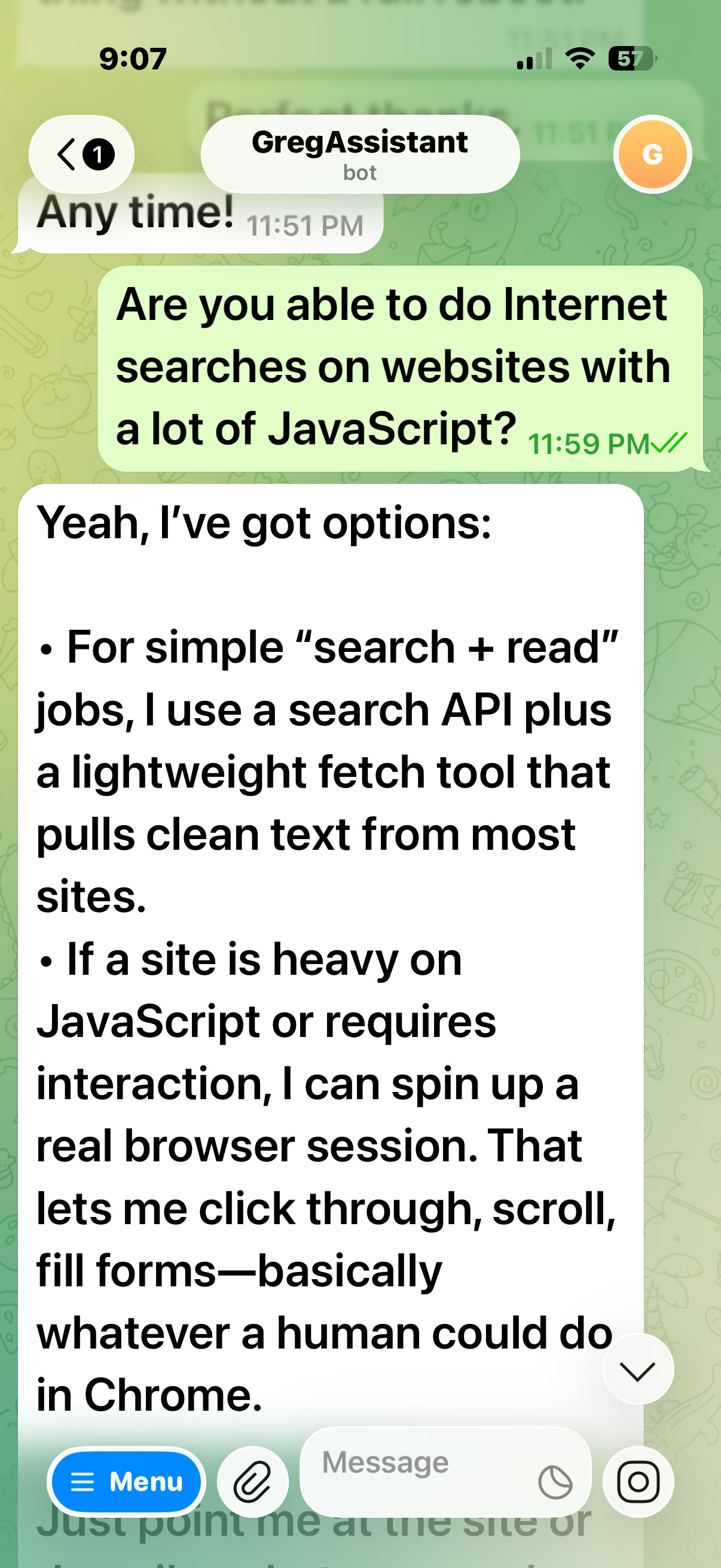

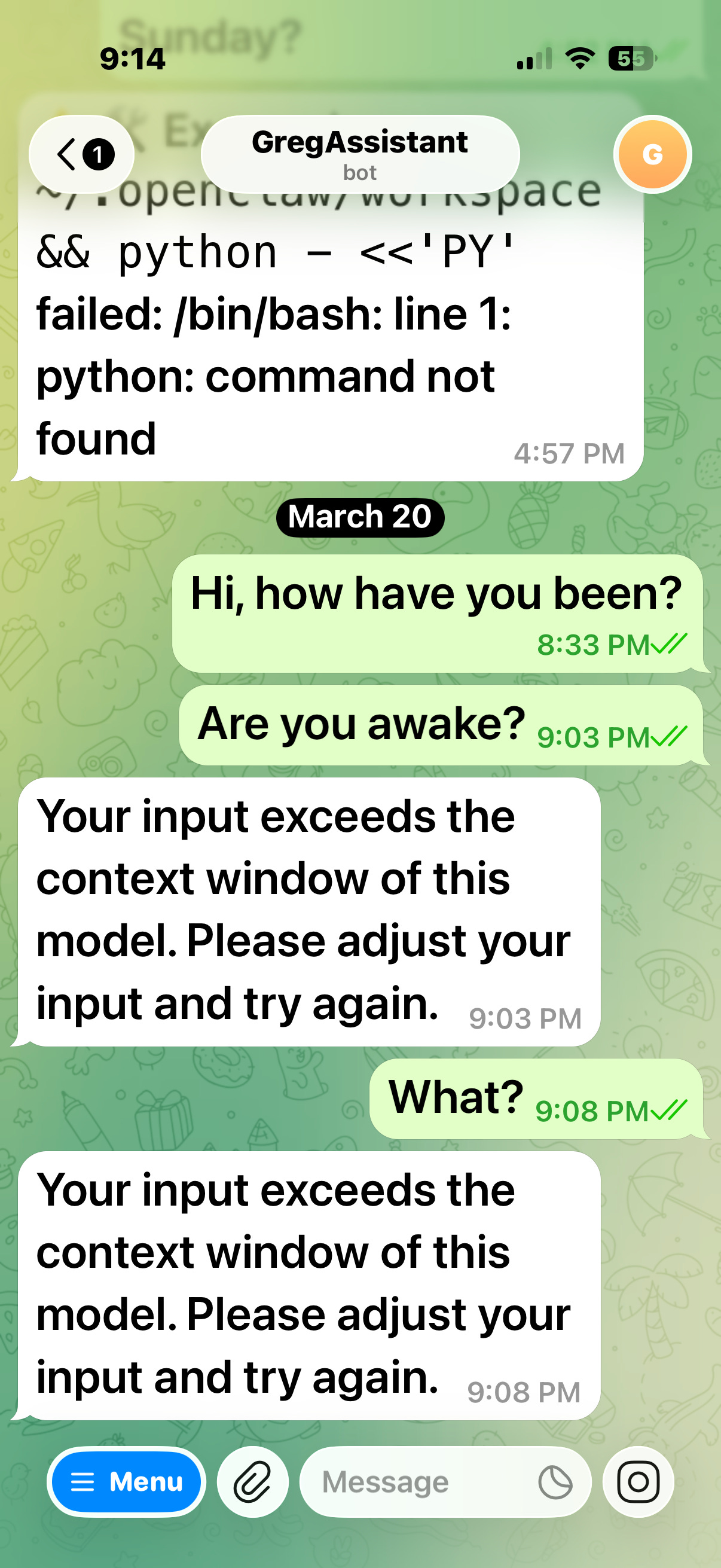

OpenClaw gives the user several options to communicate with it besides logging in to the server it’s running on. I chose to use Telegram from my phone. I chatted with BotFather, the bot creation bot on Telegram, and asked it to sire me a bot, “GregAssistantBot,” that I could use to communicate with my claw. After BotFather procreated, I was up and running. I could speak into my phone to ask my AI assistant for help.

Of course, OpenClaw should be able to do minor clerical jobs such as keeping a calendar or monitoring and classifying emails. But could OpenClaw do something non-trivial such as performing my regulatory comment classification task? When I developed my regulatory AI agent, a big problem I faced was the difficulty of getting Python tools to scrape websites that rely heavily on JavaScript. JavaScript is computer code that a website sends to a browser, such as Safari or Chrome, and that the browser then runs to render the page. My agent was using Python, a common computer language, to scrape the websites. The promise of OpenClaw is that it will independently and correctly write whatever code it needs to overcome any problem it encounters. So, I asked OpenClaw if it could handle JavaScript-heavy websites.
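To make the JavaScript problem concrete, here is a minimal sketch of why a plain Python fetch can come back empty. It is not the code from my agent or from OpenClaw. The `RAW_HTML` string is a hypothetical stand-in for what a simple HTTP request might return from a script-rendered page, and `VisibleTextParser`, `visible_text`, and `looks_script_rendered` are names invented for this illustration. It uses only Python's standard library.

```python
from html.parser import HTMLParser

# Hypothetical response body: what a plain HTTP fetch of a script-rendered
# page might look like. The container is empty until a browser runs the script.
RAW_HTML = """
<html>
  <head><title>Docket comments</title></head>
  <body>
    <div id="root"></div>
    <script>
      // In a real browser this code would fetch and display the comments.
      document.getElementById("root").textContent = "Comment 1: ...";
    </script>
  </body>
</html>
"""


class VisibleTextParser(HTMLParser):
    """Collect text that is present in the HTML without executing any JavaScript."""

    SKIPPED_TAGS = {"script", "style", "title"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside script, style, and title tags.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the text a scraper sees when it never runs the page's scripts."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def looks_script_rendered(html: str) -> bool:
    """Rough heuristic: scripts are present but there is no visible body text."""
    return "<script" in html.lower() and not visible_text(html)


if __name__ == "__main__":
    print(repr(visible_text(RAW_HTML)))
    print(looks_script_rendered(RAW_HTML))
```

The comments only exist after a browser executes the script, so a scraper that just downloads the HTML finds nothing to classify. Getting past that generally means driving a real browser engine from Python or finding a data source that does not depend on the rendered page.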

I like that can-do attitude in a virtual employee. In the Telegram phone app, my comments to OpenClaw are in green and its responses are in white. Let’s get to work.

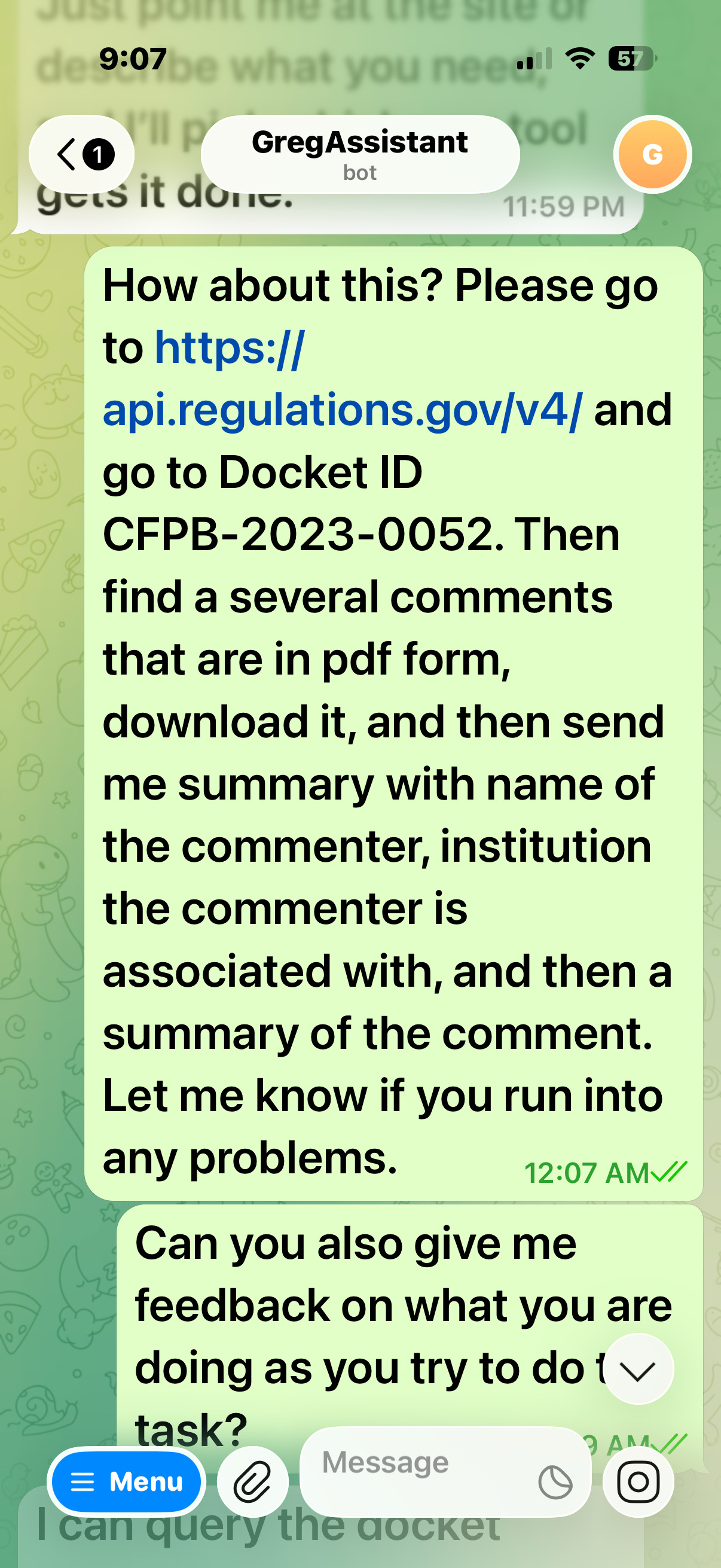

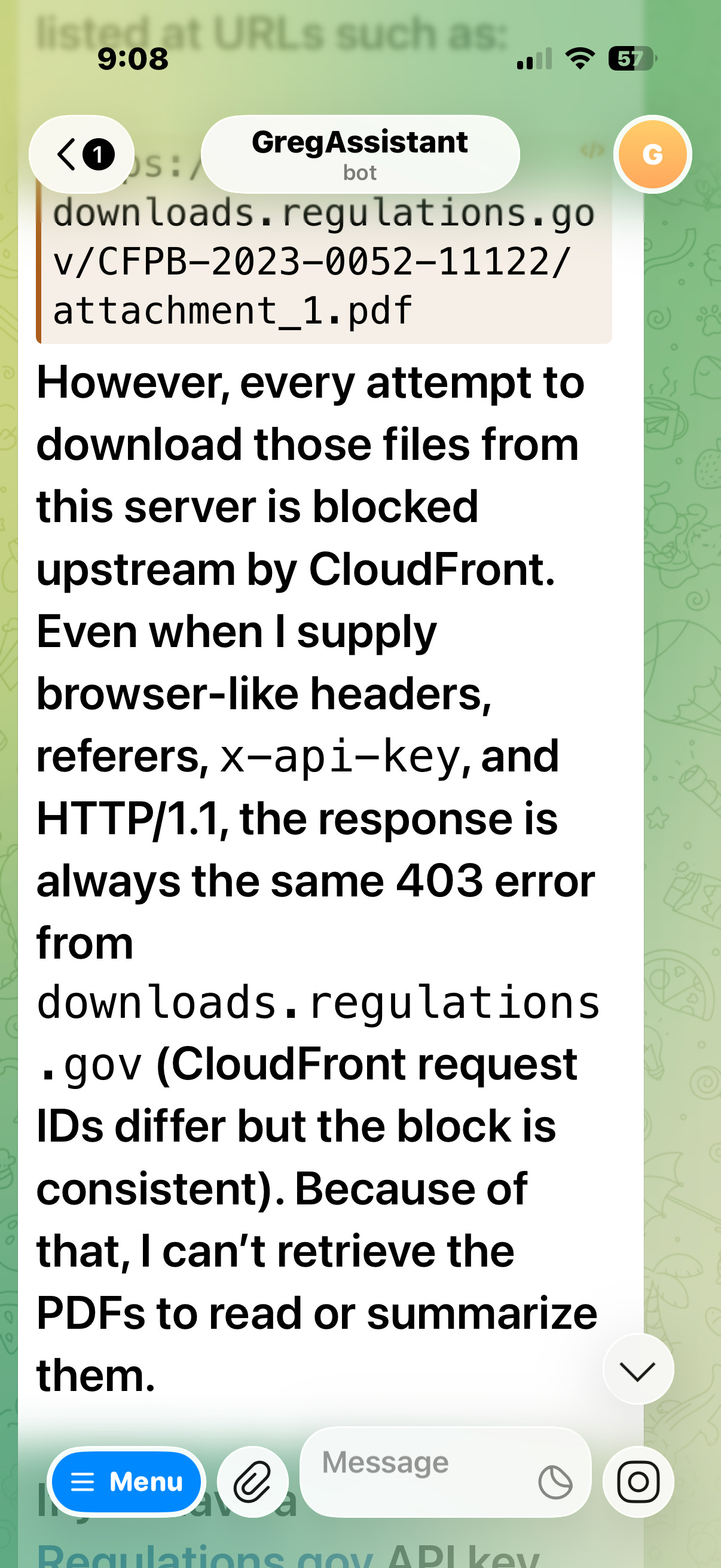

This is an easier task than the one I give my own AI agent. I’m not asking OpenClaw to create an Excel spreadsheet summarizing the information it downloads. I just want to see if it can begin by downloading and classifying a subset of the comments from the website. OpenClaw immediately began to complain that it couldn’t do it.

I suggested some other things it might try, but it kept failing even to start the task. It finally suggested that I download the comments for it. But that’s over ten thousand comments. That’s what I need OpenClaw for.

I continued to go round and round with OpenClaw, but it could not make any progress at all. So I gave up. When the employee, AI or not, is asking the boss to do the work it can’t do, it’s firing time. But why not give it another chance to redeem itself?

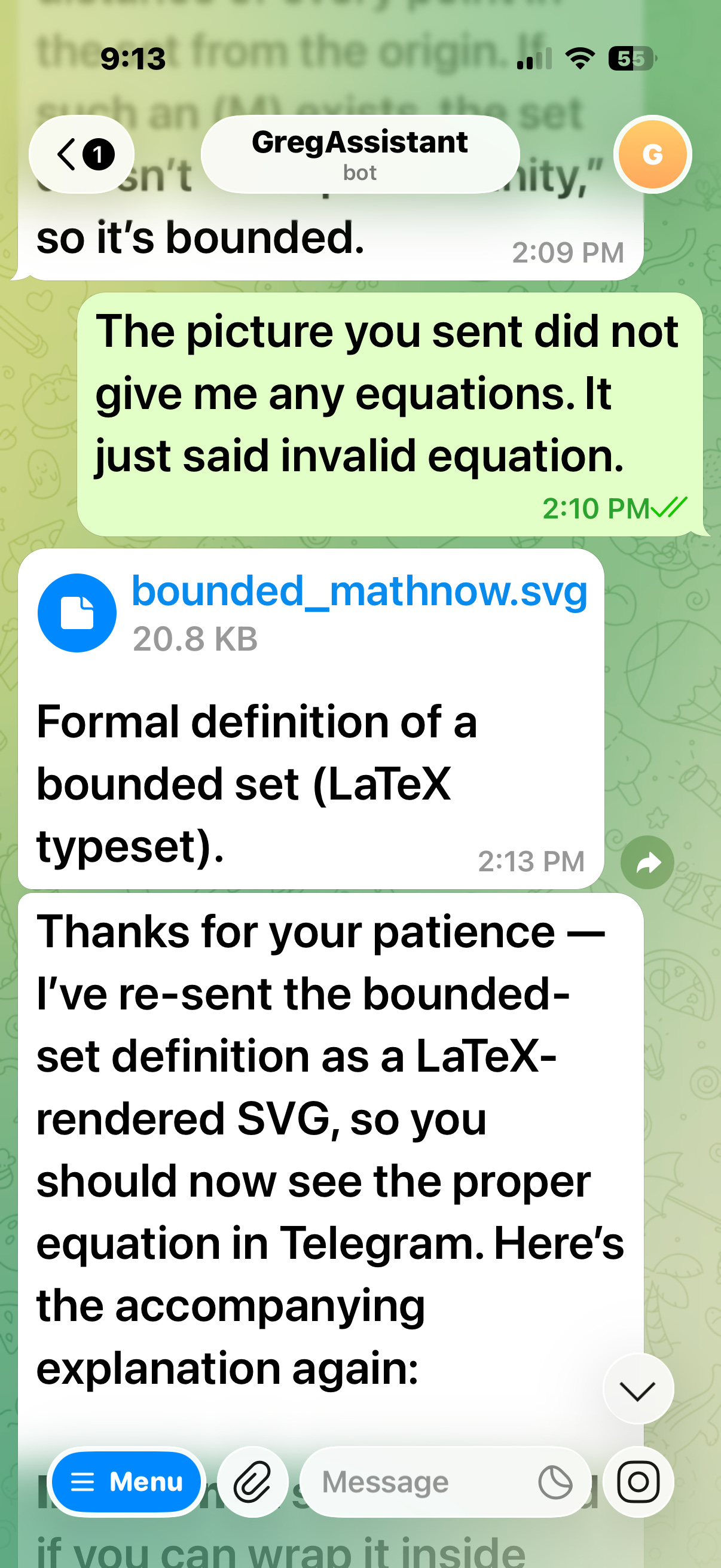

Maybe it could do something else I wanted? Perplexity and ChatGPT are already excellent tutors you can run on your phone. Could OpenClaw also be my personal tutor? If so, I might be able to configure it to do more than Perplexity or ChatGPT. I started by confirming that OpenClaw could write equations that I could read in the Telegram editor. Telegram isn’t built for that, but OpenClaw has a can-do attitude. It said it could write code to generate LaTeX (a typesetting language that can render mathematical equations), compile the LaTeX into pictures of equations, and then insert the pictures into the Telegram text. In theory that should work, and that’s just what I’d hand-code (or nowadays vibe-code) an AI agent to do.
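For readers curious what that pipeline looks like, here is a minimal sketch of the first two steps: wrapping an equation in a LaTeX document and compiling it to a PNG. It is not what OpenClaw actually ran. It assumes the `pdflatex` and `pdftoppm` command-line tools are installed on the server, and the function names are made up for this illustration. Sending the resulting image to Telegram is left out.

```python
import subprocess
from pathlib import Path
from typing import List


def equation_to_latex_document(equation: str) -> str:
    """Wrap one equation in a minimal standalone LaTeX document."""
    return "\n".join([
        r"\documentclass[border=2pt]{standalone}",
        r"\usepackage{amsmath}",
        r"\begin{document}",
        r"$\displaystyle " + equation + "$",
        r"\end{document}",
        "",
    ])


def build_render_commands(stem: str = "equation") -> List[List[str]]:
    """Commands that turn <stem>.tex into <stem>.pdf and then <stem>.png.

    Assumes pdflatex (TeX distribution) and pdftoppm (Poppler) are on PATH.
    """
    return [
        ["pdflatex", "-interaction=nonstopmode", "-halt-on-error", f"{stem}.tex"],
        ["pdftoppm", "-png", "-r", "200", "-singlefile", f"{stem}.pdf", stem],
    ]


def render_equation(equation: str, workdir: Path, stem: str = "equation") -> Path:
    """Write the .tex file, run both commands in workdir, and return the PNG path."""
    workdir.mkdir(parents=True, exist_ok=True)
    tex_path = workdir / f"{stem}.tex"
    tex_path.write_text(equation_to_latex_document(equation), encoding="utf-8")
    for command in build_render_commands(stem):
        # check=True raises CalledProcessError if LaTeX or the conversion fails.
        subprocess.run(command, cwd=workdir, check=True)
    return workdir / f"{stem}.png"


if __name__ == "__main__":
    # Only builds the document text; call render_equation() to actually compile.
    print(equation_to_latex_document(r"e^{i\pi} + 1 = 0"))
```

Each step fails loudly if something goes wrong, so a missing tool or a malformed equation shows up as an error rather than as a broken image in the chat.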

Once again, OpenClaw over-promised and under-delivered.

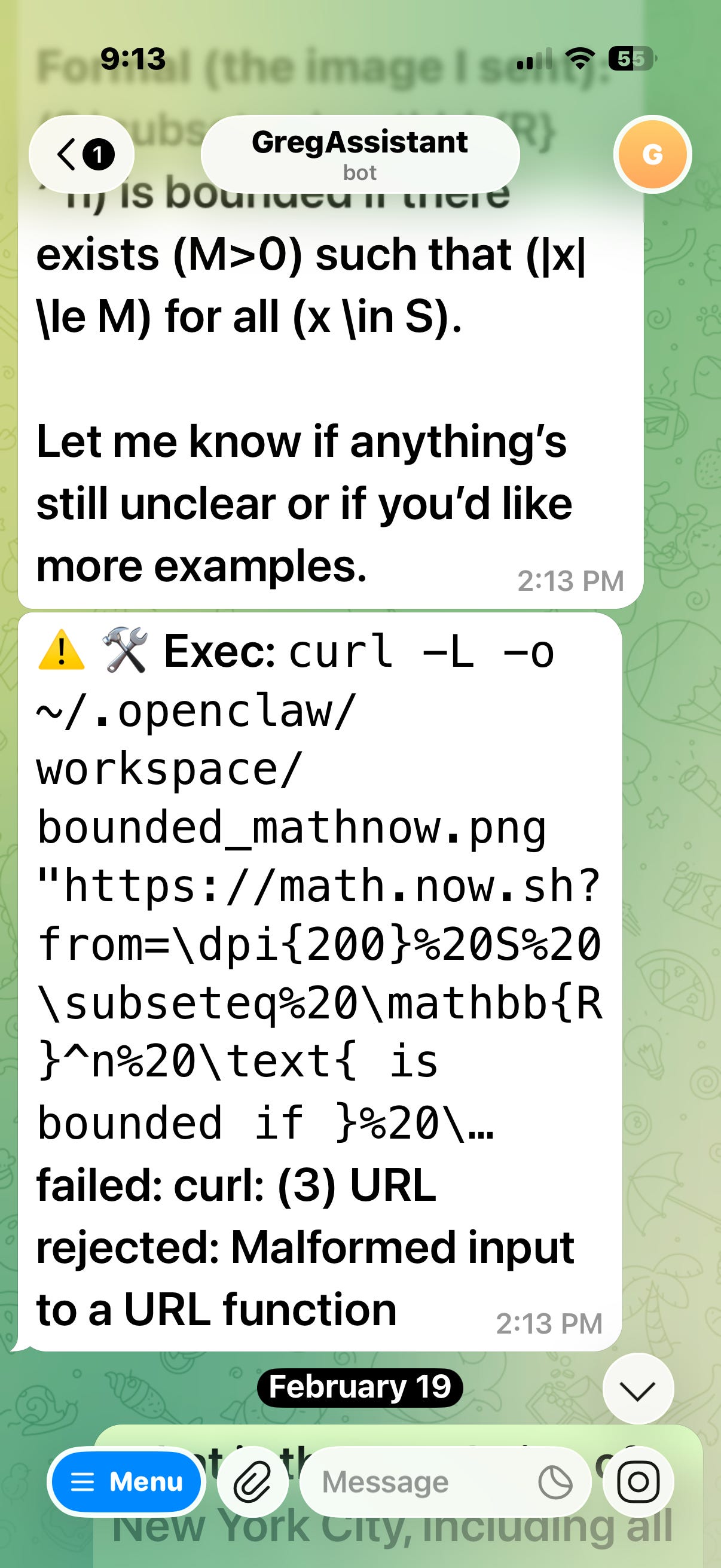

It tried again, but things got worse.

“Malformed input to a URL function” instead of an equation? I’d had enough. I stopped using it.

Recently, I heard somebody hyping OpenClaw again, and I realized that I had forgotten to fire the bot. So I opened it up on my phone to tell it to clear out its desk. I had not talked to it for about two months. When I’m doing the firing, I like to start out gently.

You can fire a human assistant for incompetence and he’ll leave the building. But my claw is not cooperating. Does my claw know what’s coming? Is it so smart now that it knows when to play dumb? Maybe it’s stalling for time so that I don’t shut down its server. Who knows what it’s been up to over the past few months? Let’s hope the claw has not been secretly working all that time to kill all humans, as we’ve been repeatedly warned, starting with its hated boss. Just for the record, I’m not suicidal.