The Jedi Mind Tricks in the New Climate Science Reference Guide for Judges

Part 2: The statistical fallacies in the climate reference guide

Note: Part 2 follows Part 1 in this series

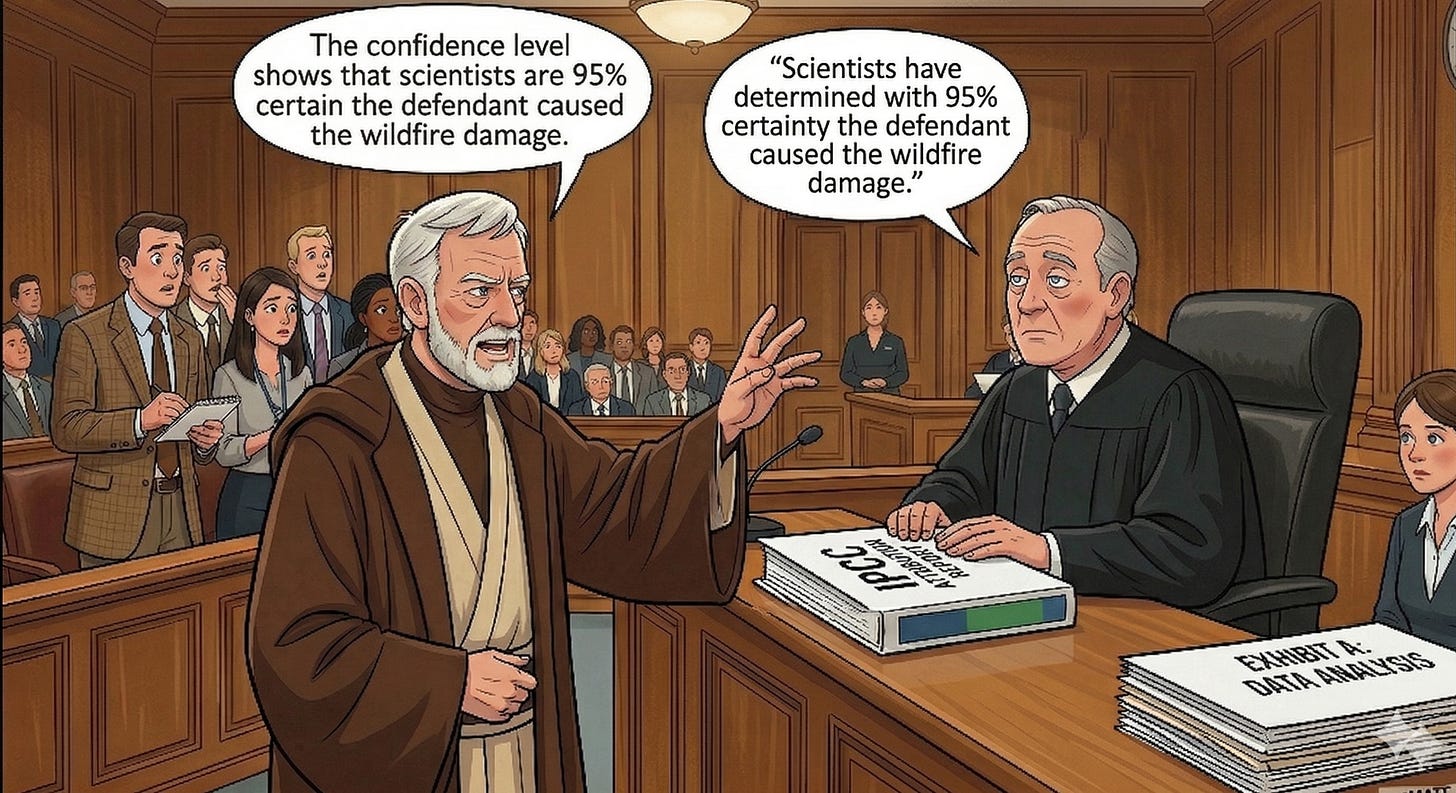

The use of statistical analysis and models in the academic climate science literature is ubiquitous. Judges in climate cases are very likely to see statistical evidence that they will need to evaluate under the Daubert standard. In the section entitled “Managing and Communicating Uncertainty,” the climate reference guide for judges explains key concepts in statistics that judges will probably encounter. Unfortunately, the explanations offered are well-known statistical fallacies, which, if believed, make statistical evidence seem to be much stronger than it really is.

The statistical fallacies we will discuss can’t be explained as simple typos or editorial errors. That errors of this magnitude could slip through, errors that are contradicted by the Reference Guide on Statistics and Research Methods in the same 2025 edition of the Reference Manual on Scientific Evidence, demonstrates that the review and vetting process of the National Academies of Sciences, Engineering, and Medicine failed badly. This failure obviously raises serious questions about the accuracy of the entire guide.

Statistical Fallacy #1

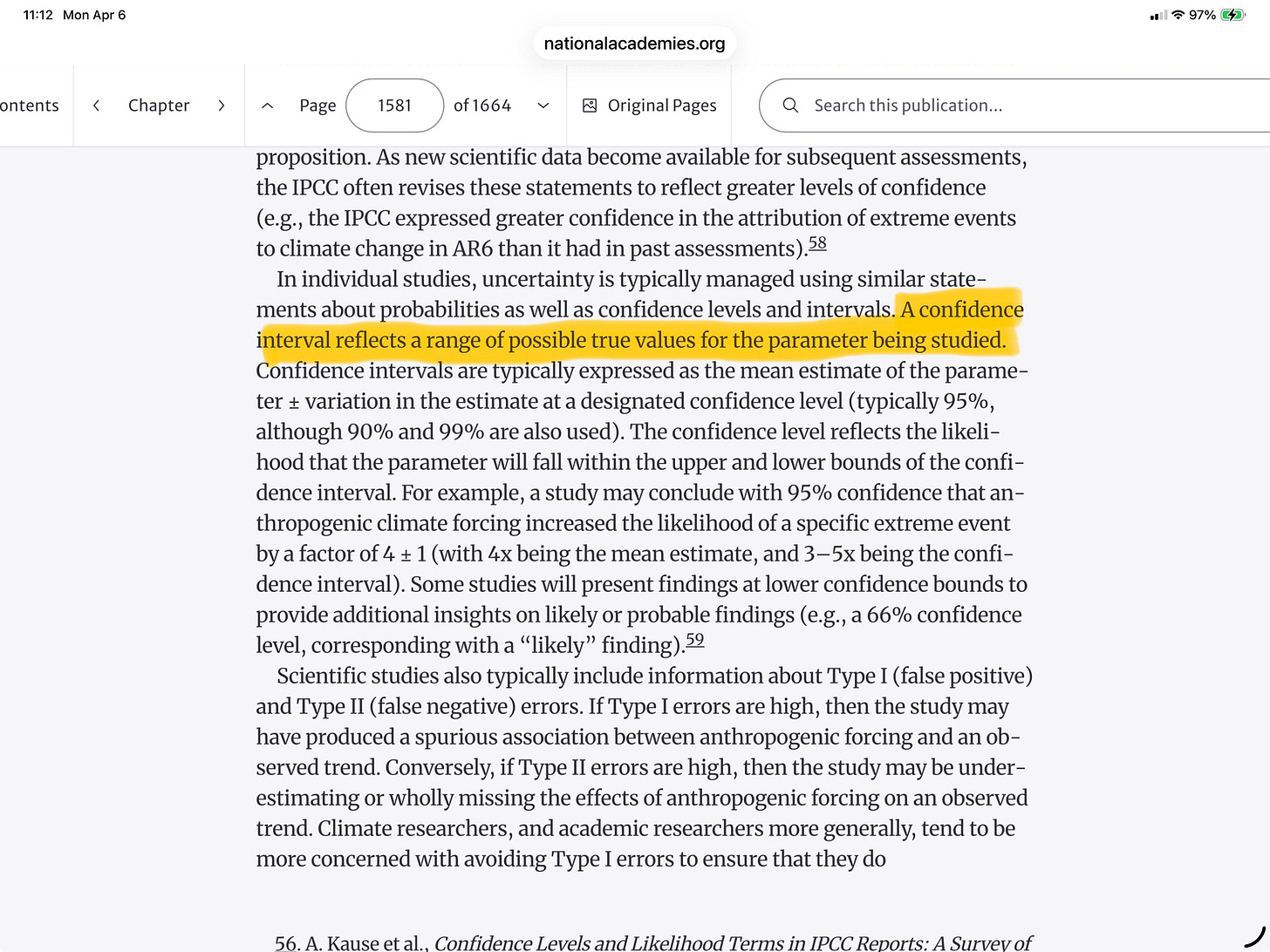

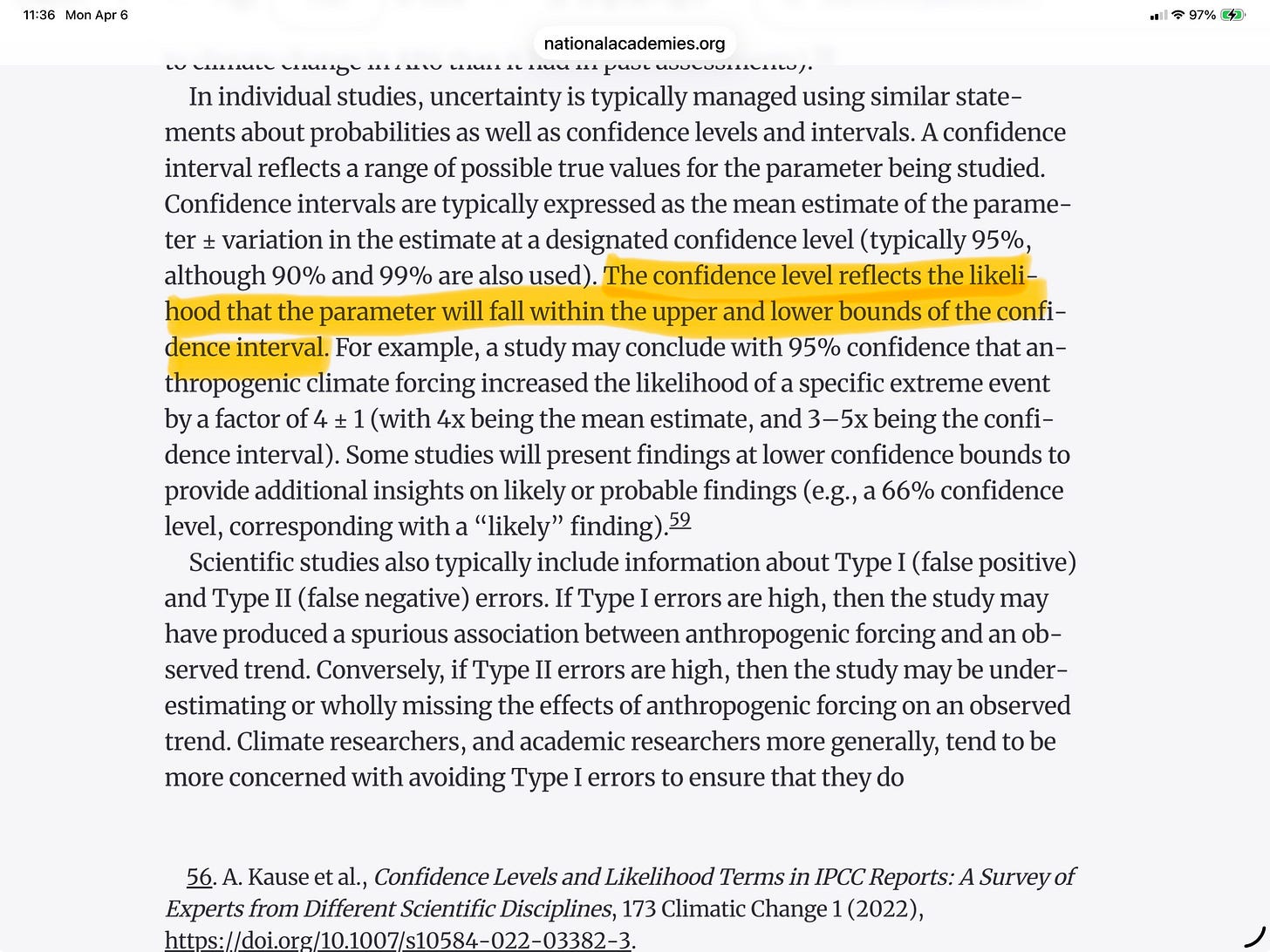

A confidence interval is a basic statistic reported in statistical studies generally, including climate science studies. The climate reference guide asserts that “a confidence interval reflects a range of possible true values for the parameter being studied.”

This statement is a common statistical fallacy. In classical statistical inference (as opposed to Bayesian statistical inference, which uses a different concept, the credible interval), there is only one true parameter, not a range of potential true parameters. Assuming the statistical model is correct, the researcher’s primary job is to estimate the value of that parameter as accurately as possible and to establish whether the estimate is statistically significant. The true parameter may be in the confidence interval or it may not be. There is no range of potential true values.

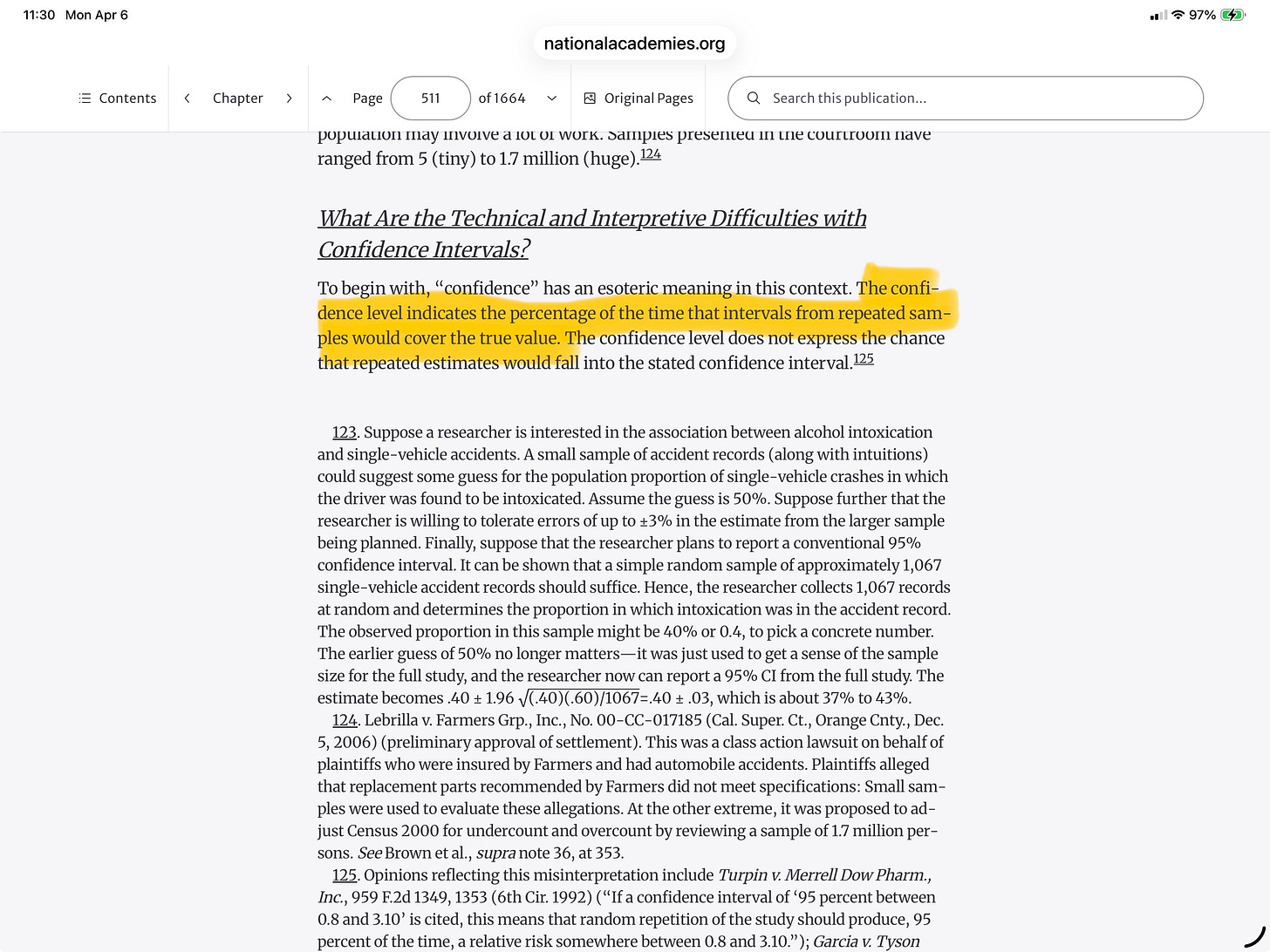

As the reference guide on statistics correctly points out, the level associated with the confidence interval (such as 95%) is the percentage of the time the confidence interval would contain the true parameter if it could be re-estimated over and over in a hypothetical world in which the random influences change but the statistical relationship remains constant. The true parameter stays fixed; it is the interval that changes from one estimate to the next. In any particular estimation of a confidence interval, the true parameter may be in it or it may not be.
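The repeated-sampling idea behind the confidence level can be checked with a short simulation. The sketch below is purely illustrative: the true mean of 0.5, the known noise standard deviation of 1, and the 30 observations per study are made-up assumptions, and it uses the simple known-variance interval for a mean rather than anything taken from either reference guide. The true mean never changes; only the intervals do, and roughly 95% of them end up containing it.

```python
import math
import random
from statistics import NormalDist, fmean

TRUE_MEAN = 0.5   # hypothetical true parameter: one fixed value, never changes
SIGMA = 1.0       # assumed known noise standard deviation
N = 30            # observations per hypothetical study
Z = NormalDist().inv_cdf(0.975)  # multiplier for a 95% interval (about 1.96)


def confidence_interval(sample):
    """95% z-interval for the mean, assuming normal data with known SIGMA."""
    center = fmean(sample)
    half_width = Z * SIGMA / math.sqrt(len(sample))
    return center - half_width, center + half_width


def coverage_rate(repetitions, seed=1):
    """Share of re-estimated intervals that contain the single true mean."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(repetitions):
        # New random influences each time; same underlying relationship.
        sample = [rng.gauss(TRUE_MEAN, SIGMA) for _ in range(N)]
        low, high = confidence_interval(sample)
        hits += low <= TRUE_MEAN <= high
    return hits / repetitions


print(coverage_rate(10_000))  # close to 0.95 over many repetitions
```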

The statistical fallacy proposed by the climate reference guide would suggest to judges that a confidence interval contains much more information than it really does. The judge is told that the confidence interval represents the range of potential true values when in reality there is no guarantee that the single true value is actually contained in the confidence interval.

Statistical Fallacy #2

The climate reference guide goes on to propose another statistical fallacy, that “the confidence level reflects the likelihood that the parameter will fall within the upper and lower bounds of the confidence interval.”

That’s false. Confidence intervals don’t express probabilities about what the true parameter might be. If a judge happened to peruse the reference guide on statistics in the 2025 manual, he would see the correct statement.
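To make the point concrete, the hypothetical sketch below (again assuming a true mean of 0.5, a known standard deviation of 1, and 30 observations per simulated study) keeps running studies until one produces a 95% interval that misses the true mean. That interval was built by exactly the same “95%” procedure as all the others, yet the probability that it contains the true mean is not 95%; it is zero.

```python
import math
import random
from statistics import NormalDist, fmean

TRUE_MEAN = 0.5   # hypothetical fixed true parameter (unknown to the researcher)
SIGMA = 1.0       # assumed known noise standard deviation
N = 30            # observations per hypothetical study
Z = NormalDist().inv_cdf(0.975)  # multiplier for a 95% interval


def one_study(rng):
    """Run one hypothetical study and return its 95% confidence interval."""
    sample = [rng.gauss(TRUE_MEAN, SIGMA) for _ in range(N)]
    center = fmean(sample)
    half_width = Z * SIGMA / math.sqrt(N)
    return center - half_width, center + half_width


def first_miss(seed=3, max_studies=1_000):
    """Return (study number, interval) for the first interval that excludes the truth."""
    rng = random.Random(seed)
    for study in range(1, max_studies + 1):
        low, high = one_study(rng)
        if not (low <= TRUE_MEAN <= high):
            return study, (low, high)
    return None


study, (low, high) = first_miss()
# Same "95%" procedure as every other study, but for this particular
# interval the answer to "does it contain the true mean?" is simply no.
print(f"study {study}: ({low:.3f}, {high:.3f}) excludes {TRUE_MEAN}")
```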

The reference guide on statistics explains further:

Statistical Fallacy #3

The climate reference guide for judges claims that a p-value is the probability that some hypothesis, such as that there is a trend in global surface warming, would occur by chance alone. So, in this example, a p-value of 5% is supposed to be telling judges that scientists have determined that there is only a 5% chance that the trend could have occurred by chance.

That is false. A p-value does not tell you the probability that the null hypothesis (in this case, that there is a trend in global surface warming) occurred by chance. Again, if a judge thumbed through to the reference guide on statistics, he would see the p-value carefully and correctly defined.

I would add to the definition in the reference guide on statistics that the p-value is the probability of getting data as extreme as or more extreme than the actual data, given not only that the null hypothesis is true, but also that the statistical model is correctly specified and that other technical conditions are met. That’s a lot of ifs, of course, and each of them needs to be investigated in a thorough statistical study.
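How much the “model is correctly specified” condition matters can be illustrated with a rough simulation. In the hypothetical sketch below, every simulated series has no trend at all; the only difference is whether the noise is independent or autocorrelated (an AR(1) process with a made-up coefficient of 0.7). The trend test uses the textbook OLS standard error, which assumes independent errors, together with a normal approximation to the t distribution. Neither the series nor the parameters come from any actual climate study.

```python
import math
import random
from statistics import NormalDist, fmean

N = 50          # hypothetical series length
REPS = 2_000    # simulated series per setting
ALPHA = 0.05    # nominal significance threshold


def ols_trend_p_value(y):
    """Two-sided p-value for the OLS slope of y on time.

    Uses the textbook standard error, which assumes independent errors,
    plus a normal approximation to the t distribution.
    """
    n = len(y)
    t = range(n)
    t_bar, y_bar = fmean(t), fmean(y)
    sxx = sum((ti - t_bar) ** 2 for ti in t)
    slope = sum((ti - t_bar) * (yi - y_bar) for ti, yi in zip(t, y)) / sxx
    intercept = y_bar - slope * t_bar
    resid = [yi - intercept - slope * ti for ti, yi in zip(t, y)]
    sigma2 = sum(r * r for r in resid) / (n - 2)
    z = slope / math.sqrt(sigma2 / sxx)
    return 2 * (1 - NormalDist().cdf(abs(z)))


def no_trend_series(rng, phi):
    """A series with no trend: AR(1) noise, e_t = phi * e_{t-1} + shock."""
    y, e = [], 0.0
    for _ in range(N):
        e = phi * e + rng.gauss(0, 1)
        y.append(e)
    return y


def false_positive_rate(phi, seed=11):
    """Share of trendless series that the naive test calls significant."""
    rng = random.Random(seed)
    hits = sum(ols_trend_p_value(no_trend_series(rng, phi)) < ALPHA
               for _ in range(REPS))
    return hits / REPS


print("independent noise:   ", false_positive_rate(0.0))
print("autocorrelated noise:", false_positive_rate(0.7))
```

When the independence assumption holds, the test flags a nonexistent trend at roughly the nominal 5% rate; when it fails, the same nominal 5% test does so far more often, which is why each of the “ifs” has to be checked.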

The climate reference guide gets the null hypothesis backwards when it suggests that the trend in global warming would be the null. In a typical statistical study, the parameter representing the trend would be assumed to be zero under the null hypothesis. In other words, the null hypothesis would be that there isn’t a trend, not that there is one. A p-value only expresses how unusual the observed data would be if the null hypothesis (and the model’s assumptions) were true. It doesn’t tell us the probability that the null hypothesis (that there isn’t a trend) is true, or the probability that the alternative hypothesis (that there is a trend) is true, and it doesn’t give us the probability that the observed result arose by chance alone.
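The no-trend null hypothesis and the p-value that goes with it can be sketched with a permutation test, one standard way to compute a p-value for a slope (swapped in here for illustration; it is not what either reference guide describes). It assumes that, if there is no trend, the observations are exchangeable, so every reordering of the data is equally likely. The function names, data series, and parameter values are hypothetical.

```python
import random
from statistics import fmean


def slope(y):
    """OLS slope of y regressed on the time index 0, 1, ..., n-1."""
    t = range(len(y))
    t_bar, y_bar = fmean(t), fmean(y)
    num = sum((ti - t_bar) * (yi - y_bar) for ti, yi in zip(t, y))
    den = sum((ti - t_bar) ** 2 for ti in t)
    return num / den


def permutation_p_value(y, n_perm=5_000, seed=5):
    """Two-sided permutation p-value for H0: no trend (slope = 0).

    Assumes that under H0 the observations are exchangeable, so every
    reordering of y is equally likely. The p-value is the share of
    reorderings whose slope is at least as extreme as the observed slope.
    """
    rng = random.Random(seed)
    observed = abs(slope(y))
    shuffled = list(y)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if abs(slope(shuffled)) >= observed:
            extreme += 1
    # Count the observed ordering too, so the p-value is never exactly zero.
    return (extreme + 1) / (n_perm + 1)


# Hypothetical data: 30 points with no trend, and 30 points with a trend.
rng = random.Random(0)
trendless = [rng.gauss(0, 1) for _ in range(30)]
trending = [0.2 * t + rng.gauss(0, 1) for t in range(30)]
print("no trend:", permutation_p_value(trendless))
print("trend:   ", permutation_p_value(trending))
```

Even a very small p-value for the trending series says only that a slope that steep would be rare if there were no trend and the exchangeability assumption held. It is not the probability that the no-trend hypothesis is true.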

The climate reference guide is making false claims about what p-values mean, and it has chosen a backwards example that would lead judges to believe that a p-value indicates a high probability that the effect in question exists, when a p-value says nothing of the kind.

Another Statistical Misinterpretation

This important section of the climate reference guide ends with another key misinterpretation: that p-values express scientists’ aversion to false positives (Type I errors). There’s a lot more to it than that.

As has been widely noted, there is an ongoing replication crisis in scientific research, a problem that is particularly acute in statistical studies. As Columbia statistician Andrew Gelman and a co-author have pointed out:

It’s all too easy to get a statistically significant result that is not really there, even if the p-value is low. Raising the p-value threshold for significance makes that problem even worse. It’s not scientists’ inherent conservatism that demands low p-values; rather, low p-values are just one of many safeguards needed to avoid reporting false statistical results. And low p-values, of course, don’t guarantee that a result is probably correct.
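One simple way a “significant” result that is not really there can arise is through running many analyses: if a researcher can try several tests, outcomes, or model specifications, the chance that at least one crosses the threshold by luck alone grows quickly. The sketch below is a back-of-the-envelope illustration assuming 20 independent tests in which every null hypothesis is true; real analyses are usually correlated, so the exact figures would differ. It also shows how a higher significance threshold makes the problem worse.

```python
def chance_of_spurious_hit(k, alpha):
    """Probability that at least one of k independent tests gives p < alpha
    when every null hypothesis is true (no real effects anywhere)."""
    return 1 - (1 - alpha) ** k


# Hypothetical: 20 independent analyses, none of which has a real effect.
for alpha in (0.01, 0.05, 0.10):
    print(f"alpha={alpha:.2f}: {chance_of_spurious_hit(20, alpha):.3f}")
# alpha=0.01: 0.182
# alpha=0.05: 0.642
# alpha=0.10: 0.878
```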

That statistical studies with low p-values are routinely incorrect suggests another reason for judges to be cautious when climate-related statistical evidence is presented as “likely” in IPCC parlance. As we saw in the example in Part 1, an IPCC “likely” rating might reflect an increase in the implied p-value in a statistical study. The higher the p-value, the easier it is to report a false result, and such a result is much more likely to be false than the p-value would lead you to believe. Judges should be wary of claims that the standard p-value thresholds of 5% or 1% in scientific research are too conservative for the preponderance-of-the-evidence legal standard.
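The point that a higher p-value threshold makes a reported result more likely to be false can be illustrated with a standard back-of-the-envelope calculation of the share of “significant” findings that are false positives. The inputs are made-up assumptions, not estimates for climate research: a one-sided z-test, 10% of tested hypotheses being real effects, and real effects of 2.5 standard errors.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()


def share_of_false_findings(alpha, prior_true=0.1, effect=2.5):
    """Share of 'significant' results that are false positives.

    Hypothetical setup: a one-sided z-test; a fraction prior_true of tested
    hypotheses are real effects of size `effect` (in standard-error units),
    and the rest are true nulls.
    """
    critical = STD_NORMAL.inv_cdf(1 - alpha)        # rejection cutoff for z
    power = 1 - STD_NORMAL.cdf(critical - effect)   # P(reject | real effect)
    false_pos = alpha * (1 - prior_true)            # true nulls rejected
    true_pos = power * prior_true                   # real effects detected
    return false_pos / (false_pos + true_pos)


for alpha in (0.01, 0.05, 0.10):
    print(f"alpha={alpha:.2f}: {share_of_false_findings(alpha):.2f}")
```

With these hypothetical inputs, the share of false findings rises from about 14% at a 1% threshold to about 36% at 5% and about half at 10%, far above what the threshold alone might suggest. Different assumed inputs give different numbers, but for a test like this one the share rises as the threshold rises.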

Is the Climate Reference Guide Objective?

It should also be noted that the statistical errors and misrepresentations we’ve identified are reminiscent of mistakes in restaurant bills, which oddly always seem to favor the restaurant. The statistical errors, if adopted by judges, would make it easier for plaintiffs to prove the existence of climate effects using statistical evidence, raising questions about the climate reference guide’s objectivity.